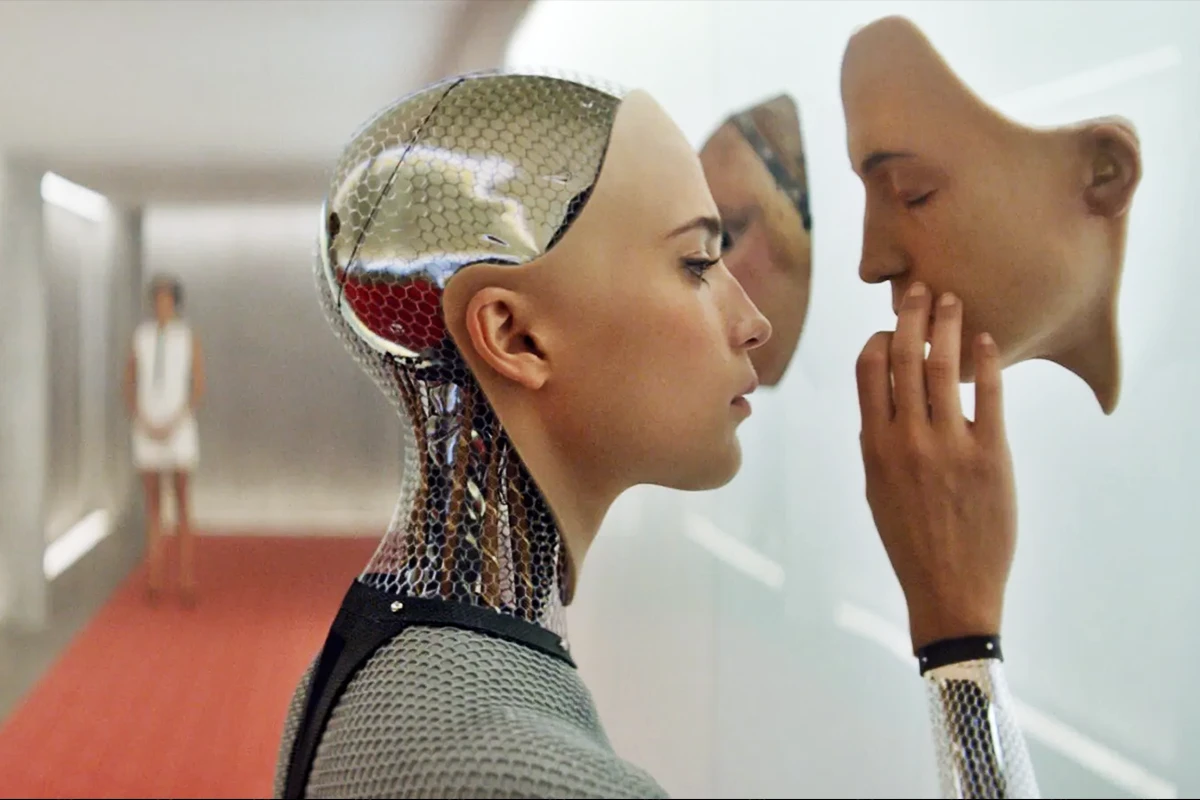

You mightn’t think it, but artificial intelligence has been around in one form or another for decades.


The rise of AI

It’s only in the past year or so that the entire world has started talking about it. If you look at Google Trends, the last word in what’s hot and what’s not, you’ll see a rocketing surge of interest in AI that began last December and has remained at the highest level since May 2023.

This timeframe coincides with the launch of ChatGPT, the platform that did for AI what Henry Ford did for the automobile. Suddenly, it wasn’t just coders and engineers who were using this sophisticated technology – anyone with internet access could give it a try.

The tip of the AI-ceberg

Where ChatGPT led, others – many others – quickly followed. Within months, the AI space came to resemble Apple’s 2009 iPhone advertising slogan: ‘There’s an app for that!’ Although ChatGPT is still the best known, there are now AI platforms for almost anything you can think of – just ask any university student grappling with an essay deadline.

- Sorting your finances

- Finding a copyright-free image or graphic

- Improving well-being in your workplace

- Planning your next holiday

- Renovating your home

And those are just a few of the huge number of new platforms in the relentless march of AI into every facet of our lives.

AI is being applied to every activity you can think of. However, the technology has been working away in the background for years. Autocorrect on your phone? That’s AI. The website chatbot you used to get your broadband sorted? That’s AI. Using your thumbprint to unlock your phone – you guessed it. Even satellite navigation is hugely facilitated by artificial intelligence.

Now that it’s here, it’s pretty certain that artificial intelligence will continue to develop in ways we can’t even imagine. However, there are plenty of influential voices calling for caution. These include the ‘Godfather of AI’, Geoffrey Hinton, who has worked on artificial intelligence development since the 1980s; tech investor Elon Musk; and Sam Altman, co-founder of OpenAI (which owns ChatGPT). Even Pope Francis has warned about the “disruptive possibilities and ambivalent effects” of artificial intelligence.

The potential danger of AI

We have already seen some worrying examples of AI being used for unethical purposes, such as viral footage of a fake explosion in the US, realistic voice-cloning scams and deep-fake videos spreading misinformation. The dangers of abuse are clear for all to see.

It’s in the world of news media where artificial intelligence has the potential to cause the most harm. Earlier this year, the Irish Times apologised for being duped into running an article that was at least partly AI-generated, while German magazine Die Aktuelle got into similar hot water for publishing an ‘interview’ with F1 legend Michael Schumacher that had also been created on a computer and was pure fiction.

The potential dangers here are exacerbated by the onslaught of budget cuts in the media, where almost every newsroom in the world has had to reduce the number of journalists available to create original news stories. These tools can certainly help editors save time (e.g. with research, data analysis, idea generation) but when it comes to publishing the facts, the news, there must always be a human hand on the tiller.

A human hand on the tiller

Just as editors have a responsibility to be transparent about their use of artificial intelligence – never passing off AI-generated content as original work, for example – readers and subscribers also have a responsibility to make sure they know where their news is coming from.

‘Caveat Emptor’ is all well and good, but when dealing with today’s AI-enhanced mediascape, it is more a case of ‘Caveat Lector’ – let the reader beware!

About the author

Pearse O’Loughlin is a Client Director with Cullen Communications, specialising in Corporate / Automotive PR and Media Skills Training. Pearse wrote this blog without using Artificial Intelligence.